Overview

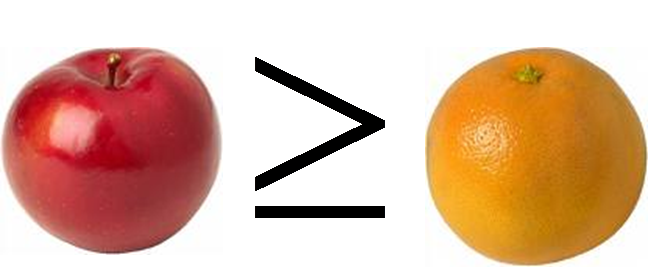

How do we know if a conference X is better than a conference Y? In what aspect? How can a system recommend an ideal venue to submit your paper? In an interdisciplinary organization, how do we know if a scholar A in a field X is better than a scholar B in a field Y? If so, what does that mean? Given your research interests, which professor is going to be your best advisor? Are we comparing Apples against Oranges? Can we do it?

All of these questions are inherently difficult to answer because of the fuzziness tangled up in them. However, their gist can be rephrased as one task: "how do we measure the distance between bibliographic entities X and Y?" To address these challenges, the AppleRank project aims to build a novel unifying framework for better ranking of bibliographic entities (e.g., academic venues, authors, research groups, or articles). Bibliographic entities are called apples in the project, hence the name AppleRank.

In particular, the project considers the following issues:

- Metric issue: Is a conventional citation-based metric such as the Impact Factor the best way to judge the quality of bibliographic entities? Can we do better?

- Computational issue: Given a set of metric functions and a large set of bibliographic entities, how do we maintain the metric values? Incrementally?

- Test issue: How can we test the developed metric? Large-scale mechanical testing on CiteSeer? Or human-subject testing?
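
For readers unfamiliar with the conventional baseline named in the metric issue, the sketch below computes the standard two-year Impact Factor: citations received in a given year by items published in the two preceding years, divided by the number of citable items published in those two years. The function name, the dictionary layout, and the venue numbers are hypothetical and chosen only for illustration; this is not the metric AppleRank proposes.

```python
def impact_factor(citations_in_year, citable_items, year):
    """Two-year Impact Factor of a venue for `year`.

    citations_in_year: maps a publication year to the number of citations
        received during `year` by items published in that publication year.
    citable_items: maps a publication year to the number of citable items
        the venue published in that year.
    Both layouts are assumptions made for this sketch.
    """
    window = (year - 1, year - 2)
    cites = sum(citations_in_year.get(y, 0) for y in window)
    items = sum(citable_items.get(y, 0) for y in window)
    if items == 0:
        raise ValueError("no citable items in the two-year window")
    return cites / items


# Hypothetical venue: 300 citations in 2006 to 100 items from 2004-2005.
citations_2006 = {2005: 120, 2004: 180}
items_published = {2005: 50, 2004: 50}
print(impact_factor(citations_2006, items_published, 2006))  # 3.0
```

Because the result depends only on raw citation counts in a fixed window, it cannot by itself tell whether two venues in different fields are comparable, which is the kind of limitation the metric issue raises.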

$ Last generated: Wed May 16 18:18:30 2007 EST $