Overview

In the (Data) Linkage project, a sequel to the Quagga data cleaning project, we revisit the extensively studied traditional (record) linkage problem of identifying matching entities in a collection, and argue that novel solutions are needed to cope with novel challenges. In particular, we pursue the following directions:

- Web-based linkage: When a lack of evidence makes it hard to determine whether two entities match, we propose to use the Web as the ultimate knowledge source.

- Parallel linkage: By turning existing data linkage solutions into parallel programs, one can achieve substantial speed-up. However, because of the intricate interplay between match and merge operations in data linkage, the parallelization requires careful design.

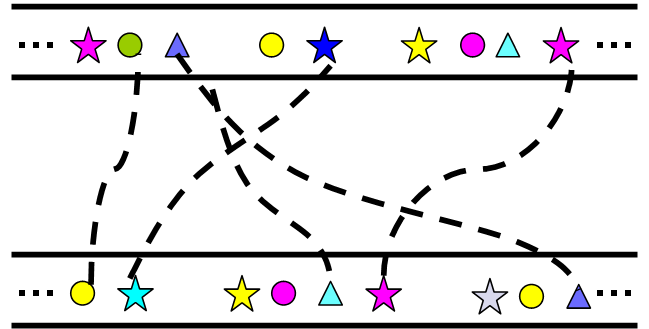

- Group linkage: When the entities to match are no longer simple records but have non-trivial internal properties or structure (such as groups), exploiting that structure can significantly improve data linkage.

- Adaptive linkage: In existing data linkage solutions, various parameters are often set once (by users) and do not change during execution. However, by adaptively changing their values to maximize objective functions, one can substantially reduce false negatives.
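
To make the group linkage direction more concrete, here is a minimal sketch of how two groups of records might be compared as groups rather than record by record. It is an illustration under stated assumptions, not the project's actual method: the token-level Jaccard similarity, the greedy one-to-one matching, the normalization, and the names `jaccard`, `group_similarity`, and `theta` are all hypothetical choices made for this example.

```python
from itertools import product


def jaccard(a: str, b: str) -> float:
    """Token-level Jaccard similarity between two record strings (assumed measure)."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def group_similarity(g1, g2, theta=0.5):
    """Score two groups of records by greedily pairing their members.

    Each record is paired with at most one record of the other group, and
    only pairs with similarity >= theta (a hypothetical threshold) count.
    """
    # Score every cross-group record pair, best pairs first.
    pairs = sorted(
        ((jaccard(r1, r2), i, j)
         for (i, r1), (j, r2) in product(enumerate(g1), enumerate(g2))),
        reverse=True,
    )
    used1, used2, total = set(), set(), 0.0
    for sim, i, j in pairs:
        if sim < theta:
            break  # remaining pairs are all below the threshold
        if i in used1 or j in used2:
            continue  # keep the matching one-to-one
        used1.add(i)
        used2.add(j)
        total += sim
    # Normalize by the number of distinct records after merging matched pairs.
    denom = len(g1) + len(g2) - len(used1)
    return total / denom if denom else 0.0


g1 = ["data linkage on the web", "parallel record matching"]
g2 = ["data linkage on the web", "group record matching"]
print(group_similarity(g1, g2))
```

The greedy pairing is chosen here only for brevity; an optimal bipartite matching could be substituted without changing the overall shape of the score.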

$ Last generated: Fri Jul 6 00:45:37 2007 EST $